List of Abbreviations

ASoS Action Short of Strike

C&AG Comptroller and Auditor General

CCMS Council for Catholic Maintained Schools

CFS Common Funding Scheme

DE Department of Education

EA Education Authority

ETI Education and Training Inspectorate

EPPOC Executive Programme on Paramilitarism and Organised Crime

FSCN Full Service Community Network

FSES Full Service Extended Schools

FSME Free School Meal Entitlement

GCSE General Certificate of Secondary Education

LEO NI Longitudinal Education Outcomes Northern Ireland

LIT Local Impact Team

NAO National Audit Office

NI Northern Ireland

NIAO Northern Ireland Audit Office

NIESIS Northern Ireland Extended Schools Information System

NIMDM Northern Ireland Multiple Deprivation Measure

OBA Outcomes Based Accountability

OECD Organisation for Economic Co-operation and Development

PAC Public Accounts Committee

PISA Programme for International Student Assessment

SEN Special Educational Needs

SEND Special Educational Needs and Disability

SMART Specific, Measurable, Achievable, Realistic, Time-dependent

TSN Targeting Social Need

Executive Summary

1. The Department of Education (the Department) delivers a range of programmes and interventions intended to support improved educational outcomes for children and young people experiencing disadvantage. Following the Public Accounts Committee’s ‘Closing the Gap’ inquiry, the Department wrote to the Northern Ireland Audit Office in January 2024 identifying a number of specific interventions relevant to this objective. These included Targeting Social Need (TSN), Sure Start, the Extended Schools Programme, the Full Service Programme, the WRAP Programme, the Special Educational Needs and Disability (SEND) Transformation Programme and the former Education Transformation Programme.

2. These interventions account for significant annual expenditure and form key elements of the Department’s approach to addressing educational disadvantage. The education system is inherently complex, with multiple interdependent factors influencing a child’s learning and development. Outcomes are shaped by a wide range of social, economic, family and school level variables, many of which fall outside the Department’s remit. As a result, measuring the specific impacts of individual educational interventions can be challenging.

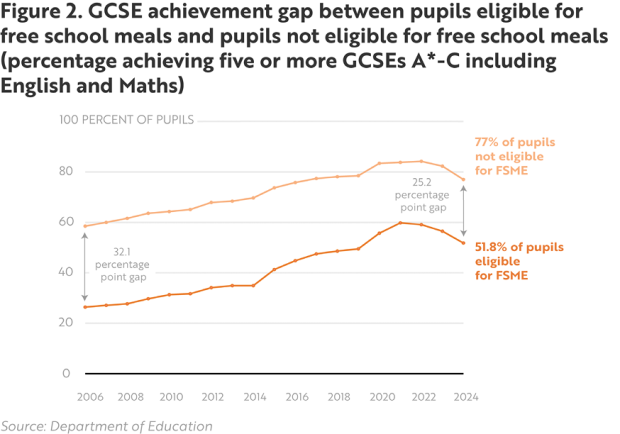

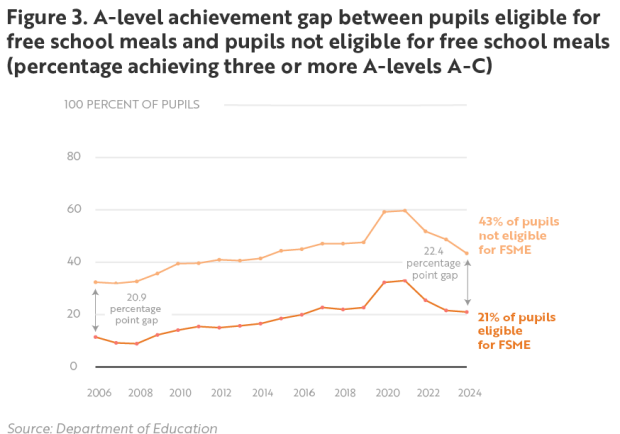

3. In the course of this review the Department has highlighted the long-term improvement in the rates of young people achieving five or more GCSEs between 2005/06 and 2023/24 as key evidence of the effectiveness of its overall programme of activities. Over this time there was an improvement from 52.6 per cent to 71.6 per cent. The Department has also highlighted data that shows the attainment gap between pupils entitled to free school meals and pupils not entitled to free school meals decreased by 6.9 per cent.
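As an illustrative aside, and not part of the Department's or the NIAO's analysis, the change in the headline attainment rate quoted in paragraph 3 can be expressed either in percentage points or as a relative change. The minimal sketch below uses only the two rates given in that paragraph. The function names are hypothetical and chosen purely for illustration.

```python
def percentage_point_change(start_pct: float, end_pct: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return round(end_pct - start_pct, 1)


def relative_change_pct(start_pct: float, end_pct: float) -> float:
    """Change expressed as a percentage of the starting rate."""
    return round((end_pct - start_pct) / start_pct * 100, 1)


# Rates quoted in paragraph 3: pupils achieving five or more GCSEs,
# 2005/06 (52.6 per cent) and 2023/24 (71.6 per cent).
start_rate, end_rate = 52.6, 71.6

print(percentage_point_change(start_rate, end_rate))  # 19.0 percentage points
print(relative_change_pct(start_rate, end_rate))      # 36.1 per cent of the 2005/06 rate
```

The same distinction matters when reading the reported 6.9 per cent reduction in the attainment gap. The text does not state whether that figure is a percentage-point or a relative change, so it is not recomputed here.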

4. However, the Magenta Book emphasises the need for evaluation frameworks and data collection that can identify the contribution of individual interventions. System-wide performance measures cannot, on their own, isolate the specific impact of interventions. This report is intended to assess the arrangements the Department has in place to evaluate the effectiveness of individual programmes and interventions. The report does not seek to provide an overarching assessment of the collective effectiveness of these programmes and interventions. Whilst we have found the Department has made efforts to improve some aspects of its evaluation practice, we also identified weaknesses within these arrangements. These weaknesses significantly reduce the Department’s ability to demonstrate whether its individual interventions are effective in reducing educational inequalities.

Strategic Approach to Evaluation

5. Although these interventions share a common objective, the Department does not have a unified evaluation framework to coordinate its evaluation activities. Such a framework would allow the Department to establish the specific contribution of individual programmes and interventions to strategic objectives, while also outlining core and consistent performance measures and definitions. In its absence, evaluation practices have evolved independently across the different programmes, as and when each was developed or became subject to some form of review. This has resulted in fragmented and inconsistent approaches that do not support coherent analysis of the effectiveness of the Department’s overall investment in reducing educational disadvantage.

6. The implications of this fragmented approach are most evident in the Department’s largest and longest-standing interventions. TSN, with annual expenditure of approximately £75 million and cumulative expenditure of over £1 billion since 2005, has not been evaluated in a manner capable of determining its impact on the outcomes of disadvantaged pupils. The Northern Ireland Audit Office (NIAO) 2021 report, ‘Closing the Gap’, found that the Department does not have data to clearly demonstrate if the funding has improved the performance of pupils with free school meal entitlement. Sure Start has operated for over two decades and, while evaluation was limited in its ability to demonstrate long-term educational impact, we have noted improvements in its evaluation since 2015.

Programme-Level Planning and Business Cases

7. We found that neither TSN nor the Full Service Programme was supported by a business case. Since our review, the Department has rectified this in respect of the Full Service Programme. Given the scale and importance of these programmes, the absence of a business case represents a fundamental gap in programme design and oversight. The development of business cases is a core requirement of the guidance which the Department is required to adhere to within its programme management. Without such documentation, key elements, including programme rationale, intended outcomes, performance indicators, data needs and evaluation cycles, are not clearly defined.

8. Where business cases exist for other programmes, with the exception of Sure Start, they do not consistently reflect the guidance that the Department is required to adhere to. Objectives are often broadly stated and not supported by measurable indicators, baseline information is limited, and evaluation arrangements are not presented as integral components of programme design. These issues restrict the Department’s ability to monitor performance effectively and limit opportunities to use evaluation to inform continuous improvement.

Stakeholder Engagement

9. Stakeholder engagement is an essential component of designing meaningful and proportionate evaluation arrangements. The guidance framework requires the Department to involve delivery partners, service users and other stakeholders when defining programme objectives, identifying relevant outcomes and designing data collection methods. However, we found that stakeholder engagement at this stage within the Department’s evaluation arrangements is limited.

10. During this review the Department was able to identify a number of evaluations that had been undertaken with the support of a range of external stakeholders. These examples included the establishment of a cross-sector programme board during the scoping of the SEND Transformation Programme and the commissioning of research examining deprivation measures. The Department also presented its commissioned review of Sure Start by Ipsos as another positive example of stakeholder involvement, and provided some evidence that discussions took place with delivery partners on how to improve the format and content of evaluation reports for the Full Service Programme and WRAP.

11. However, we consider these examples were not representative of a consistent approach to involving stakeholders in all stages of evaluation practices. Stakeholder involvement was often focused on operational reporting rather than on shaping how evaluation was to be undertaken. Expanding and formalising stakeholder engagement at the earliest stages of programme and intervention design would strengthen the quality and relevance of evaluation and improve understanding of programme effects at local level.

Data Collection and Evaluation Methodologies

12. The Department’s TransformED Strategy (2025) states “it is important to improve data on system performance to support an effective and evidence-based system, with professional collaboration and evidence-rich discussion and collaboration at its heart”. In this review we identified variation in the quality, reliability and completeness of data collected across interventions. Programmes rely on a mixture of quantitative data, qualitative feedback, case studies, internal monitoring reports and self-assessment tools. While each of these sources can provide useful insights, they have not been woven together into a comprehensive, coherent or comparable evidence base.

13. TSN represents the most material gap. Despite the scale of investment, data collection through the TSN Planner remains limited. Completion rates are low and reporting is heavily reliant on self-assessment. Ongoing industrial action in the form of Action Short of Strike (ASoS), combined with the impact of COVID-19 restrictions, has created substantial gaps in the Department’s evidence base, due to the absence of statutory assessment data. Over the past decade, teaching unions have instructed members not to implement departmental initiatives that are not formally agreed, which impacted on the numbers of schools that completed the TSN Planners.

14. The information provided through the TSN Planner describes the types of activities funded but does not measure outcomes or demonstrate whether disadvantaged pupils have benefited. This is inconsistent with Magenta Book guidance, which calls for defined evaluation metrics and mechanisms capable of clearly demonstrating the impact of TSN funding.

15. In other programmes, such as Extended Schools and WRAP, data collection is more structured, but there is limited evidence of independent validation or systematic quality assurance. Qualitative information features prominently across programmes, yet in many cases it is not supported by quantitative outcome measures that could enable more robust assessment of programme effectiveness.

16. Some programmes have introduced recognised tools to strengthen aspects of evaluation. Sure Start has implemented early years assessment systems and now reports through Outcomes-Based Accountability scorecards. Extended Schools has access to a structured online reporting platform. However, these improvements have not been extended across the full range of programmes contributing to the Department’s strategic objective. In addition, financial information is not consistently integrated with outcome reporting, limiting the Department’s ability to undertake value for money assessments that consider both programme costs and the results achieved.

Communication of Evaluation Findings and Shared Learning

17. We found that evaluation findings, where produced, are not communicated consistently or systematically. While Sure Start stands out as an example of better practice, with published scorecards and multiple inspectorate reports, most other programmes publish limited evaluative information. This restricts transparency and limits opportunities for shared learning within the Department and across the education sector. The absence of accessible evaluation outputs reduces the ability of schools, delivery partners and policymakers to identify effective practice, understand programme impacts or consider how approaches could be replicated or improved. It also limits the ability of the Department to demonstrate to the public and the wider government system how significant resources are being used to support disadvantaged children and young people. This is particularly important given findings from A Fair Start (2021) and the Independent Review of Education (2023), supported by the 2018 Programme for International Student Assessment (PISA) Study, which show that Northern Ireland compares favourably with other OECD (Organisation for Economic Co-operation and Development) countries, with a smaller disadvantage attainment gap. Establishing clearer links between interventions and their impacts could therefore generate valuable learning for schools and stakeholders both within Northern Ireland and beyond.

Value for Money Conclusion

18. Overall, we found that the Department cannot currently demonstrate, at a programme or intervention level, that intended outcomes are being achieved or value for money delivered. Significant investment continues to be made across multiple interventions and programmes without a sufficiently robust evidence base. Opportunities exist to strengthen coordinated evaluation arrangements, data collection, stakeholder engagement and communication structures. Enhancing these areas would support the Department in assessing performance, improving programme effectiveness and making informed decisions about future investment. Strengthening evaluation arrangements is essential to ensuring that resources are directed towards interventions that make the greatest difference to disadvantaged children and young people, and to enabling the Department to provide meaningful assurance on the effectiveness of its approach.

List of recommendations

Recommendation 1

The Department should review its evaluation arrangements for programmes linking to overcoming educational disadvantage.

This review should ensure these arrangements are coherent, proportionate and provide comparable evidence across the full range of programmes the Department delivers towards this objective.

Recommendation 2

The Department should ensure that each of its programmes is supported by evaluation frameworks that are consistent with guidance and best practice.

These frameworks should clearly articulate the specific objectives of each programme, its measurable performance indicators, and the evaluation cycles that will be applied.

Recommendation 3

The Department should ensure that when it is designing evaluation arrangements for future programmes, these are supported by meaningful stakeholder engagement.

Recommendation 4

The Department should review and strengthen the various self-assessment approaches used across different programmes to ensure that, where possible, these are consistent and allow for appropriate quality assurance and validation.

Recommendation 5

The Department should review and strengthen its arrangements for collecting data used to evaluate these programmes and ensure that it has systems and processes in place, supported by robust data quality assurance arrangements, that allow it to efficiently gather appropriate quantitative information.

Recommendation 6

Publishing evaluation outputs consistently, linking data to outcomes, and sharing lessons learned with stakeholders would strengthen governance and support evidence-based decision-making.

The Department should ensure that it has appropriate mechanisms in place to provide internal decision-makers with evidence about the effectiveness of programmes on an ongoing basis. Where evaluation identifies good practice, the Department should ensure its processes allow this to be shared within the education sector and, if appropriate, with other government departments.

Meaningful annual performance reports should be published to provide the taxpayer with detailed information on the cost, performance and impact of individual interventions and programmes delivered by the Department.

Part One: Introduction

1.1 Evaluation is a critical function of effective government. Good evaluation provides a systematic basis for the assessment of whether the expenditure incurred by government departments is effectively supporting delivery of the intended outcomes. It enables departments to determine whether individual programmes should be continued, scaled up, reconfigured or discontinued. Evaluation is also a key enabler of transparency and accountability, highlighting where public money has or has not made a difference to society.

1.2 Robust programme evaluation is critical for departments to demonstrate accountability, assess effectiveness, and inform evidence-based decision-making. Without structured evaluation frameworks, departments risk delivering initiatives that lack clear measures of success, limiting transparency and the ability to demonstrate value for money. Comprehensive evaluation ensures that outcomes are aligned with strategic objectives and provides assurance that resources are being used efficiently to achieve intended impacts.

1.3 Despite these clear benefits, evaluation has been challenging for public sector organisations to implement effectively. A recurring issue highlighted in NIAO reports on public services has been limitations in data and/or evaluation. Similarly, the National Audit Office, in its 2021 overarching review of evaluation across Westminster departments, noted that “despite government’s commitment to evidence-based decision-making, much government activity is either not evaluated robustly or not evaluated at all.”

Evaluation is required in Northern Ireland

1.4 Since 2020, public bodies in Northern Ireland have been required to ensure their evaluation approach is based on the guidance set out in the Department of Finance’s Better Business Cases NI. This provides Northern Ireland departments with guidance that must be applied for the development and review of project and programme business cases. Northern Ireland bodies can also draw upon guidance published by HM Treasury: Better Business Cases: for better outcomes.

1.5 A key principle is that business cases should be treated as “thinking exercises” that will provide a repository of evidence to support decision-making through the entire lifetime of a programme or intervention. It is critical business cases are not conceived of as being simply a document for approval at a point in time. They should be living documents, reviewed and updated throughout programme life cycles. Programme evaluation should be planned from the outset and embedded within the business case. Without this, the Department’s ability to assess effectiveness and value for money is significantly constrained.

1.6 There are a number of further guidance documents that provide direction which Northern Ireland bodies should adhere to. The HM Treasury Green Book provides instruction on appraisal and evaluation which, like the Northern Ireland guidance, uses the Five Case Model (strategic, economic, financial, commercial and management) to guide business case development. The HM Treasury Magenta Book provides guidance on what to consider when designing an evaluation, types of evaluation, evaluation approaches and the main stages of developing and delivering evaluation.

1.7 There is also established best practice from an audit perspective on the key characteristics of an effective departmental approach to evaluation. The National Audit Office’s (NAO) ‘Evaluating government spending: an audit framework’ sets out four such characteristics:

- Strategy: Departments should have a single evaluation strategy, or framework, that aligns with their strategic objectives. The education programmes should be evaluated against the strategic objectives of the Department of Education. The rationale behind the programmes should be informed by outputs and learning from previous evaluations of what has worked and why.

- Planning: Evaluation should be incorporated throughout the policy life cycle. The Department should have a clear vision of how it will measure success, and how it will ensure appropriate resources are available to undertake evaluation. Stakeholders should be consulted in the planning of evaluation.

- Implementation: Implementation of evaluation practices is critical to ensuring that funding programmes achieve their intended outcomes and adapt to changing needs. Appropriate evaluation methodologies should be utilised, including robust data collection and analysis procedures, quality assurance processes and good project management practices.

- Communication: Effective communication of evaluation findings is essential for transparency, accountability and continuous improvement. Evaluation findings should be communicated to senior leaders at key decision points. Findings should be published and shared with stakeholders in a timely manner and lessons learned should be easily accessed and shared.

The Public Accounts Committee criticised the Department of Education’s approach to evaluating two of its key interventions

1.8 In May 2021, the NIAO published ‘Closing the Gap - Social Deprivation and Links to Educational Attainment’. Following this report, the Public Accounts Committee published its ‘Closing the Gap’ report in January 2022. Both reports focused on two key interventions delivered by the Department of Education intended to improve educational outcomes for children from socio-economically disadvantaged backgrounds: Targeting Social Need (TSN) and Sure Start.

1.9 The 2022 Public Accounts Committee report found that the Department of Education did not have data to demonstrate if the £913 million of TSN funding provided since 2005 had improved the exam performance of Free School Meal Entitlement (FSME) pupils. The positive impact of Sure Start was noted, but attendance information was only collected by the Department from 2015-16, even though the programme had been in place for over 20 years.

1.10 Subsequently, in January 2024 the Department provided the Committee with correspondence identifying ten programmes and policies it had implemented which were related to the objective of addressing socio-economic educational disadvantage (see Figure 1). Following receipt of this correspondence, the Committee requested that the NIAO undertake a review of the arrangements in place to ensure that these programmes were achieving value for money.

Figure 1 Overview of the Education Programmes

| Programme/Intervention | 2024-25 Funding | Total Spend | Description |
|---|---|---|---|
| Targeting Social Need (TSN) | £75 million | approx. £1.4 billion | Introduced in 1991 and in its current format since 2005. Funding is allocated as part of core school budgets, via the Common Funding Formula, in recognition of the additional challenges and costs involved in supporting children and young people from socio-economically disadvantaged backgrounds |
| Sure Start | £34 million | £326 million from 2014 | Introduced in 2000, consists of 38 projects to deliver services to children in 25 per cent most disadvantaged areas |
| Extended Schools Programme | £8.1 million | £167 million | In place since 2006, funding to schools for after-school activities, clubs and additional support programmes |
| Full Service Programme | £366,000 each | £5.2 million from 2018 | Full Service Extended Schools (FSES) in Boys’ Model and Model Girls Schools in North Belfast, ongoing since 2006. Full Service Community Network (FSCN) based in the Ballymurphy area of West Belfast, ongoing since 2009 |
| WRAP Programme | £618,000 | £3.3 million | Launched in 2019, a flexible, wraparound education service for children and young people to deliver against both Department of Education and Executive Programme on Paramilitarism and Organised Crime (EPPOC) objectives, in four specific geographical areas, delivered by four delivery partners |
| SEND Transformation Programme | £1.4 million | £11.3 million | Introduced in 2020, the SEND Strategic Development Programme (SEND SDP), like the Education Transformation Programme, consisted of EA and DE led projects. Due to funding constraints, in 2022, the programme was reconfigured to facilitate the delivery of the EA’s Local IMPACT team project. The Local IMPACT Team project is an action under the SEN Reform Agenda and Delivery Plan announced by the Minister in February 2025 |
| Education Transformation Programme | Closed in March 2021 | £5.7 million | Commenced in April 2018, suspended in March 2020 due to the impact of COVID-19 and the need to redeploy staff to other business-critical areas and formally closed in March 2021. At the closure of the Transformation Programme, a post-project evaluation and closure report was completed for each project. A paper was prepared in July 2021 to set out the position of each of the projects, i.e. complete, continuing or ceasing. This programme is the only one of the seven that has closed |

Note: In addition to these seven programmes, the Department’s correspondence referred to three further schemes: Count, Read, Succeed, a literacy and numeracy strategy that ran from 2011 to January 2025 and did not have a funding stream attached; the Reducing Educational Disadvantage Programme, now the RAISE Programme, which, at the time of research for this report, had not entered its funding phase; and the Collaborative Test and Learn Programme, which is a cross-departmental programme rather than a specific education programme.

Source: Department of Education

Scope and structure

1.11 This report examines the evaluation arrangements that the Department has implemented in relation to the list of programmes referred to in Figure 1. This report does not provide a full detailed assessment on whether each of the individual programmes reviewed is achieving value for money. Instead, it assesses the extent to which the Department has adequate evaluation arrangements in place for it to be able to assure itself, and other stakeholders, on an ongoing basis, that the investment it makes in each of these programmes represents value for money.

1.12 To do this we completed a high-level assessment, based on review of key project management documentation, of the extent to which the arrangements in place complied with established best practice and guidance for effective programme evaluation. Our assessment in this report has been framed around what best practice identifies as being four key characteristics of an effective evaluation environment.

1.13 Our report is structured in the following way:

- Part Two details our assessment of the Department’s strategic approach to, and planning of, evaluation.

- Part Three covers the Department’s implementation of evaluation and communication of its findings.

Part Two: Strategy and planning

2.1 Implementing a strategic approach to evaluation is essential to ensure that all activities which share a common overarching objective are appropriately coordinated. Where multiple programmes or activities contribute to the achievement of a complex objective, departments should regularly assess and seek to understand the contribution of each individual part. Adopting an appropriately strategic approach helps ensure that the Department can undertake the scale and type of evaluation needed to provide sufficient and timely evidence about its complex interlinked work.

2.2 At the operational level of individual programmes and activities, evaluation should be planned from the outset and designed to assess their effectiveness throughout their entire lifecycle. This means setting clear objectives, defining measurable outcomes, and establishing mechanisms to track progress and impact over time. By embedding evaluation into programme design, the Department can generate robust evidence to determine whether a programme delivers value for money and aligns to strategic priorities.

2.3 This section of the report provides an overview of how effectively the Department has adopted a strategic approach to evaluating the programmes and planned for actual evaluation and appraisals to occur.

The Department does not have a strategic approach to evaluating the impact of programmes that have a shared objective

2.4 The programmes subject to this review contribute to a common objective of ensuring that educational outcomes for children can overcome socio-economic disadvantage. Given this, the evaluation of these schemes should be coherently designed to assess the collective and individual impact and value of the interventions and programmes. This is particularly important given the high level of complexity relating to these interventions, and the inherent challenge in measuring impact over time.

2.5 However, the Department has not developed a coordinated approach to evaluating the impact of its programmes and interventions on overcoming disadvantage. Nor has it established structured linkages between evaluation activities across the various programmes reviewed. This limits its ability to assess their effectiveness and weakens its capacity to demonstrate value for money. The Department highlighted to us that it publishes, on an annual basis, the school leavers survey, which provides a system-level view of GCSE and A-level performance (or equivalents) for all pupils. The Department considers that this data provides an indication that the totality of investment made in education is deriving positive benefits for pupils (see Figures 2 and 3). In our view, system-wide data like this cannot provide a sufficient basis for the evaluation of individual interventions and programmes.

2.6 The lack of an embedded strategic approach is most evident in respect of the longest-standing and most expensive intervention subject to this review, TSN. TSN funding represents one of the Department’s most significant interventions, costing approximately £75 million annually. Its primary aim is to improve outcomes for pupils from socially disadvantaged backgrounds and narrow the attainment gap between the most and least deprived. In 2021, the NIAO ‘Closing the Gap’ report found that, “despite providing £913 million of TSN funding since 2005, the Department does not have any data to clearly demonstrate if this funding has improved the performance of pupils with FSME.”

2.7 Similarly, Sure Start has been a long-term programme supported by the Department where evaluation was limited and largely focused on operational delivery rather than long-term impact. There is not yet a consistent mechanism to assess whether Sure Start interventions translate into improved educational attainment as children progress through the education system. However, we have noted that the Department has commissioned a number of independent reviews relating to Sure Start and has also begun working towards implementing systems to collect data that will support long-term research into participants’ progression from education into employment (see paragraph 3.7). The Department also refers to 2025 research by the Institute for Fiscal Studies (IFS) on Sure Start in England that highlighted long-term benefits for children and families in areas where the programme was most intensively delivered.

2.8 Evaluation of the Extended Schools Programme, the Full Service Programme and WRAP tends to focus on reporting the number and types of activities delivered, narrative updates and case studies. The Department has stated that the variation of provision across these programmes makes strategic evaluation very challenging. These programmes contain multiple and varied strands aimed at improving outcomes for disadvantaged children.

2.9 Magenta Book guidance highlights that, as far as possible, programmes with related aims should use common measures to align activities with intended outcomes and the Department’s wider strategic direction. While the existing evaluative practices for the three programmes provide useful information, establishing a more systemic, consistent evaluation framework, including shared outcome indicators, would enable more robust assessment of how each programme contributes to improvements in pupil attainment, wellbeing and progress against departmental priorities.

2.10 The SEND Transformation Programme aims to improve outcomes for children with special educational needs and disabilities. Research by the Equality Commission for Northern Ireland in 2024 found that children with SEN from socio-economically disadvantaged backgrounds are particularly vulnerable to poorer educational outcomes. While the programme addresses a key strategic priority, the link between SEN and socio-economic disadvantage is not specifically addressed within the Transformation Programme.

Recommendation 1

The Department should review its evaluation arrangements for programmes linking to overcoming educational disadvantage.

This review should ensure these arrangements are coherent, proportionate and provide comparable evidence across the full range of programmes the Department delivers towards this objective.

Two of the seven programmes reviewed did not have a business case

2.11 Whilst strategic planning of evaluation should be developed at a Departmental level, this must still be supplemented by lower-level operational planning of evaluation at individual activity or programme level. The Green Book requires that monitoring and evaluation are integral parts of the development and planning of a programme or activity. This helps the organisation ensure several things:

- that the Department has a good understanding of what it aims to achieve;

- that appropriate and proportionate evaluation will occur at the right times in the programme’s lifetime to provide meaningful information;

- that the Department will be capable of adjusting its planned activities as a result of evidence that emerges during this lifetime;

- that the Department has sufficient capacity and capability to undertake the level of evaluation that is needed; and

- that stakeholders have the opportunity to offer constructive suggestions as to how the intended approach can be improved before it is implemented and operational.

2.12 It is a fundamental requirement of Better Business Cases NI that significant expenditure streams are supported by business cases. However, whilst in this review we found that some programmes do demonstrate good practice, this was not consistently achieved across all seven programmes. Most critically, high-cost, long-standing initiatives such as TSN and Full Service were not supported by fully developed business cases.

2.13 The absence of TSN and Full Service business cases means we cannot comment on how the Department originally planned to evaluate these initiatives or whether evaluation was appropriately considered at the outset. This lack of structured planning is especially concerning given TSN’s cumulative expenditure of approximately £1 billion since 2005 and its key role in addressing educational disadvantage.

2.14 Introduced in 1991, TSN predates the Common Funding Scheme and in practice is treated as a portion of “business as usual” funding for school budgets, rather than as funding targeted at specific activities to achieve its original objectives. Consequently, TSN is not evaluated in isolation and there is very little internal evaluation documentation available. Previous external reviews by the NIAO and the PAC criticised the lack of demonstrable impact this funding is having. The Independent Review of Education (2023) stated that currently there is no way of assessing how TSN money is spent by schools and whether the specific pupils it is intended to support benefit. In our view the development of an appropriate business case for this expenditure would have helped the Department ensure that it set clear objectives, measurable indicators, and appropriate evaluation cycles for this expenditure.

2.15 The Full Service Programme is closely linked to the Extended Schools programme. Through the Education Authority (EA) and the Council for Catholic Maintained Schools (CCMS), the Department supports full service provision in two communities with high levels of social deprivation. The programme delivers additional activities designed to raise educational attainment. The Full Service Programme has two components: Full Service Extended Schools (FSES), launched in 2006 at Boys’ Model and Model Girls’ Schools in North Belfast; and Full Service Community Network (FSCN), established in 2009 in the Ballymurphy area of West Belfast. In 2024/25, the Full Service programme received £732,000 in funding, with £366,000 allocated to FSES and £366,000 to FSCN.

2.16 The Department acknowledged in 2025 that the Full Service programme lacked a business case. It worked with the CCMS and Model Schools to have one in place in 2026. No similar commitment has been made for TSN as the Department states it is part of the Common Funding Formula, many schools use it as part of their core budgets and as such there is no way of assessing how TSN funding is spent by schools. Due to the lack of a business case, neither TSN nor Full Service have post-project evaluations to provide assurance on effectiveness or value for money.

Recommendation 2

The Department should ensure that each of its programmes is supported by evaluation frameworks that are consistent with guidance and best practice.

These frameworks should clearly articulate the specific objectives of each programme, its measurable performance indicators, and the evaluation cycles that will be applied.

Where business cases do exist, they lacked sufficient detail on measuring effectiveness

2.17 The other programmes subject to this review did have business cases in place. These were based on the Better Business Case Guidance and included provisions for setting objectives using the SMART methodology (Specific, Measurable, Achievable, Realistic, and Time-dependent). They also encourage programmes to define outcomes through three core evaluation questions - How much did we do? How well did we do it? Is anyone better off?

2.18 Across the various programmes, we found areas where the business cases could have been improved to better comply with guidance. For example, neither the business case nor blueprint documents for the Education Transformation Programme, which closed in 2022, contained detail on how the outcomes would be measured to determine the programme’s effectiveness. This omission illustrates the risk of inconsistent planning and its impact on the Department’s ability to assess value for money. In response to this issue the Department explained that the Education Transformation Programme consisted of a range of projects, each with its own approach to benefits realisation and measurements.

2.19 While we found that WRAP was subject to considerable monitoring and evaluation arrangements, the business case underpinning this evaluation recognises that these arrangements rely heavily on self-reported case studies and narrative accounts. It also acknowledges that some baseline measures for targets could not be established, that certain targets lack specificity, and that reporting requirements may be more burdensome for delivery partners than beneficial in assessing effectiveness. The business case concludes that strengthening data driven evaluation, improving target specificity, and expanding the range and quality of measurable indicators would enhance the reliability of demonstrated outcomes and reduce dependence on self-reporting. Similar issues are noted in the Extended Schools business case, which acknowledges that mechanisms have not been independently assessed since the programme began in 2006. It is welcome to note that the Department intends to commission an independent review to inform future delivery.

Stakeholders could be more meaningfully engaged in the design of future evaluation activities

2.20 The Magenta Book guidance emphasises that evaluation design should be built around stakeholders to ensure relevance. Whilst not identifying a specific list, the Book articulates a wide interpretation of stakeholders as being all those that may have an interest in, are affected by, or can influence the design, delivery or outcomes of a programme. Within this context there are a wide range of stakeholders that may be able to offer the Department useful insight on its evaluation approaches. These include pupils, schools, delivery partners, parents, policy experts, academics, and other government departments in Northern Ireland or other jurisdictions.

2.21 During our review we found evidence of external stakeholders making a general contribution to evaluation by providing useful examples of high level best practice. For example, the 2020 paper ‘10 Features of Effective Schools - “STAR” Case Studies’ was prepared by the Department following meetings with schools and contributions from policy stakeholders, including the Education and Training Inspectorate. It identified a number of common characteristics of eight post primary schools demonstrating success in addressing educational disadvantage. Likewise, research undertaken by Stranmillis University College in September 2025, ‘Effective School Leadership in Disadvantaged Communities’, examined 13 high performing post primary schools with significant levels of Free School Meal Entitlement. These stakeholder-led studies offer valuable insights, and the learning generated from such work should inform the design of programme evaluations to ensure alignment with recognised guidance and good practice.

2.22 There was also some evidence of stakeholders being used to enhance the quality and implementation of evaluation during the life cycle of programmes and interventions. For example, the 2022 PAC ‘Closing the Gap’ report recommended that the Department review the suitability of the Free School Meal Entitlement (FSME) as the measure of social disadvantage. In response the Department commissioned independent research from Ulster University. The subsequent 2024 report concluded that FSME can be broadly considered equal or superior to most other commonly used proxies of household/school-level deprivation, and did not identify a superior alternative to FSME. This approach ensured that the review was comprehensive, impartial, and informed by expert analysis. While the review demonstrated engagement, it was limited to this single issue. Future evaluations could benefit from ongoing stakeholder involvement.

2.23 Sure Start has been subject to a number of other external reviews, including the Ipsos UK ‘Review of DE Targeted Early Years Interventions’ (2024). One of the issues identified in the report was that strategic stakeholders and practitioners involved in the management of Sure Start felt the administration and oversight processes were appropriate overall, but that there was scope to reduce bureaucratic burdens and ensure efficient use of resources. The review also determined that the Northern Ireland Multiple Deprivation Measure (NIMDM) remains the most appropriate mechanism for targeting Sure Start resources. This reflects good practice in involving stakeholders not only in reporting but in shaping evaluation design and using findings to improve delivery.

2.24 The introduction of Outcomes Based Accountability (OBA) evaluation within Sure Start was the result of a review commissioned by the Department, which found inconsistencies across projects on when data was being collected and the nature of the data collection. The introduction of OBA reporting was intended to strengthen the link between objectives and measurable outcomes by focusing on the core evaluation questions: How much did we do? How well did we do it? Is anyone better off? This reflects a move from process-driven reporting to outcome-focused accountability in the planning for evaluation of Sure Start.

2.25 Furthermore, the Department has commissioned five Education and Training Inspectorate evaluations of the Sure Start programme since 2018. These include a review of service delivery during COVID-19, which engaged stakeholders and assessed monitoring processes (see box below). In addition, the Department commissioned an Education and Training Inspectorate review of a specific aspect of the programme: parental engagement with the Developmental Programme for two to three year olds.

Evaluation Reports of Sure Start by the Education and Training Inspectorate (ETI)

2018 – Sure Start Evaluation Report

Focused on the effectiveness of the Sure Start projects to promote the development of children’s speech, language and communication skills and support parents/carers in their role. The report identified key strengths and areas for improvement in the outcomes and provision for children and parents, leadership and management, and recommendations to inform future actions.

2019 – Second Sure Start Evaluation Report

Built on the evidence base of the 2018 report to confirm the strengths and the areas still requiring improvement.

2020 – An evaluation of improving practice in Sure Start

Focused on how Sure Start projects use self-evaluation to bring about improvements in the quality of provision and outcomes for children and parents. The report found that projects demonstrated a reflective approach and a commitment to strengthening leadership, provision, and outcomes for children and families. The report highlights examples of effective practice that can inform regional learning.

2021 – Thematic Report on Sure Starts’ planning, delivery and monitoring of services to children and families during COVID-19

Found that projects demonstrated flexibility in planning and delivering services during COVID-19. Sure Start projects adapted planning, delivery and monitoring of services for children and families during the COVID-19 pandemic. Based on evidence from 38 projects, the evaluation considered the adaptability, innovation and resilience of staff in maintaining services for children and families during unprecedented disruption.

2025 – An evaluation of Sure Start’s Developmental Programme for 2 to 3-year-old children and the effectiveness of its play-based activities in achieving positive outcomes for the children’s development

Focused on five of the 38 projects and delivery in 16 settings not subject to the four previous ETI evaluations. The evaluation found that, overall, the programme is successful in meeting its outlined aims, with clear benefits for children, families and staff. The children are provided with meaningful play-based experiences which are supporting them to develop as confident and resilient learners who are well prepared for their pre-school education. An area of learning to note is that ETI found the reports submitted by the projects do not identify what changes and improvements are required to support Sure Start, nor the professional learning needs. The impact of actions taken to address issues is not clear from the reports. ETI found that use of the Sure Start Go database, used to collate information from the annual report cards submitted to the Department, varies across projects and a review is required.

2.26 The WRAP programme has shown responsiveness to stakeholder needs, notably by reducing administrative burdens for voluntary sector partners, replacing written updates with verbal reporting, and by maintaining regular engagement through 34 meetings with its four delivery partners. Current monitoring and evaluation arrangements are extensive, linking actions to targets where quantitative reporting is feasible, using case studies and feedback from children and parents. The Department stated that as the four WRAP programmes are very different, it has been very challenging to design an evaluation template to cover them all.

2.27 The SEND Transformation Programme offers a positive example of stakeholder engagement during its scoping phase, establishing a cross-sectoral Programme Board in 2020. The Minister’s SEN Reform Agenda (2025) introduced a five-year delivery plan and outcomes framework, including population indicators to measure progress. This approach demonstrates how stakeholder-informed priorities can shape evaluation design. Future initiatives could build on this model by embedding collaborative processes earlier and more consistently across programmes.

2.28 However, despite these examples we did not find extensive evidence of external stakeholders being involved in the initial design of evaluation when programmes were being developed and planned. Ensuring such engagement takes place at the early stages of programme design can help ensure that evaluation activities are meaningful and useful.

Recommendation 3

The Department should ensure that when it is designing evaluation arrangements for future programmes, these are supported by meaningful stakeholder engagement.

Part Three: Implementation and communication

3.1 Implementing effective evaluation on an ongoing basis requires the Department to ensure that it has appropriate methodologies and prioritises gathering the evidence it needs to support robust and timely analysis. This requires good project management and quality assurance arrangements to be in place to control the gathering and use of data and other evidence.

3.2 Good practice guidance emphasises that findings should be communicated to senior leaders at key decision points, published in a timely manner, and shared with stakeholders in accessible formats. Lessons learned should be easy to access and inform future decision-making. Without clear and consistent communication, evaluations risk becoming a compliance exercise rather than a tool for improvement.

3.3 This section of the report considers the extent to which the Department’s implementation of evaluation has achieved the standard set in good practice, and supports a robust assessment of whether value for money is being achieved.

There are significant challenges in relation to the collection of data

3.4 The Department recognises that data collection is a significant challenge across the system. The TransformED Strategy in 2025 states “it is important to improve data on system performance to support an effective and evidence-based system, with professional collaboration and evidence-rich discussion and collaboration at its heart.” The health of data collection across the programmes presents a mixed picture. TSN stands out as the weakest area.

3.5 The Department’s approach to collecting data relating to TSN is based on the TSN Planner tool. This was introduced in 2018 as part of an effort to improve data collection. The annual TSN Planner Report is compiled from the data submitted by schools through the TSN Planner. Since its introduction this survey has been beset by very low levels of completion by schools. Six per cent of schools completed the TSN Planner in 2018/19 and around 40 per cent in 2024/25. The Independent Review of Education described this as a loss of “an enormously valuable learning opportunity.”

3.6 A key cause of the poor completion rates in recent years has been the instruction issued by teaching unions to members to not implement new or existing departmental policies, initiatives, or working practices that have not been formally agreed as part of its Action Short of Strike (ASoS) approach. The TSN Planners have been considered to fall within this categorisation. By 2019 all five teaching unions were participating in ASoS, and although this extensive period of action was temporarily halted by the 2020 Pay and Workload Agreement, engagement was impacted by the disruption caused by COVID-19. The return to ASoS in late 2022, and again in early 2025, has constrained the Department’s ability to secure consistent completion of TSN Planners across the school system.

3.7 Since 2015 the Department has collected data identifying whether children entering school had attended Sure Start. The Department advised that, due to industrial action, this data is not currently available to it. The Department told us that once data access issues are resolved, this data will enable future analysis of educational attainment. The Department has also advised us that data collected from Sure Start is being incorporated into the Longitudinal Education Outcomes (LEO NI) database, which will allow longer term research into participants’ progression from education into employment. These developments, when implemented, will be welcome in empowering the Department to make more informed evaluations of the ways in which Sure Start contributes to reducing disadvantage and to inform decisions on resource allocation.

3.8 Extended Schools collects data through the Northern Ireland Extended Schools Information System (NIESIS), an online platform developed by the EA to support the evaluation and administration of Extended Schools. Annually, approximately 80 per cent of schools submit their reports on funding use and impact. Compliance, although better than TSN, is not universal across all schools who receive funding.

Recommendation 5

The Department should review and strengthen its arrangements for collecting data used to evaluate these programmes and ensure that it has systems and processes in place, supported by robust data quality assurance arrangements, that allow it to efficiently gather appropriate quantitative information.

The Department has tended to place heavy reliance on self-assessment methodologies for evaluation

3.9 During our review of the data that was gathered, we found that there was often a heavy reliance placed on self-assessment and qualitative analysis. As noted at paragraphs 2.4 – 2.13, we did not find that differences in methods could be traced back to a coordinated approach that was ensuring alignment and proportionality at a strategic level.

3.10 In respect of TSN, the Planner returns provided by schools summarise spending patterns into categories known as target headings. Schools identify targets, allocate resources and rate the impact these resources have had on a five point scale (1 = very low impact, 5 = very high impact). For example, in the 2024/25 report, the most common areas of spend by target selected by schools were:

- ensure appropriate support for SEN pupils – £7 million;

- increase the number of pupils reaching their potential – £5 million; and

- ensure teachers have the requisite knowledge to provide appropriate interventions – £2 million.

3.11 The average impact score within the returns that had been received for the 2024/25 year by April 2025 was 4.4 out of 5. In our view the self-reported scores provide some insight but can be vague and unfocused and offer little evidence on whether interventions achieve intended outcomes or reduce educational inequalities. While the TSN Planner provides insight into spending and interventions, it does not fully address key evaluation questions:

- Are interventions achieving intended outcomes?

- Is TSN reducing educational inequalities?

- What lessons can be learned for future policy?

3.12 Furthermore, within the returns schools are not required to demonstrate that TSN funding specifically benefits pupils from socially deprived backgrounds, even though the aim of the funding is to improve educational attainment for socio-economically disadvantaged children and young people. TSN Planner reports from 2022 to 2025 show that around 50 per cent of pupils supported by TSN funding are FSME and around 30 per cent are pupils with SEN.

3.13 In relation to Sure Start we found evidence that the Department has made some improvements to the quality of data. These were initially driven by the independent review in 2015, which highlighted inconsistencies in outcome data collection. The review noted that:

“the fact that most Sure Start Project evaluations are internal means they are not providing an independent view…the evaluations differ in what they have measured and how the data has been collected and therefore it is not possible to determine the findings from these evaluations at an overall Programme level.”

In response, the Department introduced an Outcomes Framework and rolled out recognised measurement tools (Wellcomm Early Years Speech and Language Toolkit (2016/17) and Outcomes Star toolset (2019/20)), and the Outcomes Based Accountability Scorecards began to be published in 2018/19.

3.14 While we consider the adoption of OBA reporting to reflect an improvement in evaluation arrangements, we noted that these reports have not included any financial analysis. Understanding how much is being spent on delivering the activities reported within an OBA framework is integral to the OBA process. Financial information should be included as part of any evaluation, to support the assessment of whether value for money is being achieved.

3.15 Reliance on self-assessment and on qualitative narrative and commentary is a common feature of evaluation in the other areas we reviewed. While NIESIS supports data collection and monitoring of Extended Schools, there is a lack of standardised definitions to measure impacts. For example, under Policy Objective 1: Reducing Underachievement, 89 per cent of schools reported “strong” or “some” evidence of improved attainment. However, the term “strong evidence” is not defined, limiting evaluative clarity. It is also unclear how lessons learned are systematically applied to inform programme development. In the Extended Schools Post Project Evaluations for 2021/22 and 2022/23, the EA stated that it is not possible to collate quantitative evidence of activity impact due to the nature of the programme, but that the Annual Reports provide considerable qualitative evidence.

3.16 There are two parts of the Full Service Programme: the Full Service Extended Schools (FSES) and the Full Service Community Network (FSCN). Both are required to produce annual reports. These reports combine narrative summaries and case studies with selected quantitative evidence. For example, the 2023/24 FSES report compared predicted and actual GCSE outcomes, identifying improvements among pupils who received substantial intervention. The 2022/23 FSES report also referenced an Education and Training Inspectorate scrutiny visit, providing an external validation of self-reported outcomes and offering assurance around improvements in attendance, counselling access and examination performance. FSCN reporting similarly incorporates baseline and summative assessments, with the 2022/23 report noting that 91 per cent of agreed targets had been achieved.

3.17 WRAP’s current evaluation practices tend to rely on self-reporting from delivery partners. While reviews of the programme include evidence of positive impact on attainment and wellbeing, the findings are based on self-reported data. The 2024/25–2026/27 business case acknowledges these challenges: while it states that the programme is value for money, it highlights difficulties in evidencing non-monetary outcomes due to the programme’s flexible design. It recommends strengthening data driven case studies, improving target specificity and increasing the robustness of educational outcome measures. Examples of case studies used to evaluate WRAP include the ‘WRAP In Your Corner’ 2024 case study of a summer programme delivered with Monkstown Boxing Club, which reported an improvement in the proportion of Year 12 students achieving five or more GCSEs, from 65 per cent to 90 per cent, with eight students progressing to further education. The Department also commissioned an Education and Training Inspectorate review of Monkstown Boxing Club. Similarly, the ‘ABC Club Evaluation’ case study for a 10-week cycle (October 2023–April 2024) presented literacy progress using Salford tests and captured pupil and parent feedback presented in bar charts.

3.18 These examples offer valuable positive narratives that can enrich understanding of programme outcomes. It is welcome that WRAP reporting requirements were adjusted to reduce administrative burden; however, reliance on internal reporting rather than independent evaluation persists. The Department advised that it is important that it designs evaluation frameworks which reflect the context, programme objectives and targets of the type of interventions being deployed. It advised that this is done in collaboration and consultation with delivery partners.

Recommendation 4

The Department should review and strengthen the various self-assessment approaches used across different programmes to ensure that, where possible, these are consistent and allow for appropriate quality assurance and validation.

It is not clear whether evaluation findings are communicated effectively to support learning across the education sector

3.19 Transparent and timely communication of evaluation findings is critical for accountability and improvement. While some programmes, notably Sure Start, demonstrate good practice, others fall short. Publishing all evaluation outputs consistently, linking data to outcomes, and sharing lessons learned with stakeholders would strengthen governance and support evidence-based decision-making.

3.20 The Department compiles annual TSN Planner Reports based on information from the schools that make submissions. Two reports (2022/23 and 2023/24) are available on the Department’s website, offering some transparency. However, the reports are descriptive rather than evaluative, focusing on spending patterns without linking data to outcomes. Limited accessibility and timeliness mean stakeholders cannot easily determine whether this long-established intervention delivers meaningful results, and meaningful lessons cannot be learned from areas where the programme is working well relative to other areas.

3.21 In addition to the annual Planner Reports, the Department has also previously published a supplementary ‘Examples of Effective Practice from the TSN Planner’ for 2021/22 to fulfil one of the recommendations from the PAC’s ‘Closing the Gap – Social Deprivation and Links to Educational Attainment’ report. Examples of effective practice disclosed in the document include:

Target: “Increase the number of pupils reaching their potential”

Example of effective practice:

“Attainment has increased in areas targeted. Many children scored higher than expected from previous standardised tests and gaps within classes narrow as underachievers improve.”

Example of effective practice:

“Resources/supports enabled us to provide additional intervention for pupils who needed it. A particular focus was on early intervention with both parenting information evenings facilitated by a specialist in this area followed up by intervention for targeted pupils in school.”

3.22 Eight primary schools, five post-primary schools and two special schools agreed to the publication of their targets, number of pupils that received support and their impact assessment of TSN funding for 2021/22 as ‘Examples of Effective Practice’. If the communication of best practice is not a systematic and beneficial process within TSN, and other programmes, the opportunity to derive insights that could inform and guide the strategic development of the interventions is diminished. The Department advised that due to staff capacity within the Tackling Educational Disadvantage Team, it was unable to repeat the effective practice work.

3.23 Sure Start demonstrates stronger practice in publishing evaluation outputs. Outcomes Based Accountability (OBA) Report Cards for 2020/21 to 2023/24 are available on the Department’s website, alongside five Education and Training Inspectorate evaluations (2018–2021). The OBA Report Cards provide structured, outcome-focused information, while the Education and Training Inspectorate reports offer qualitative insights into programme delivery and impact. Overall, Sure Start represents the most accessible and transparent example among the programmes reviewed.

3.24 Two annual reports (2021/22 and 2022/23) are publicly available for the Extended Schools programme, and the list of funded schools has been published annually from 2017/18 to 2025/26. While the reports are clear and accessible, publishing all annual reports consistently would strengthen transparency. Current reports include examples of interventions and some outcome data but lack detailed analysis of effectiveness or lessons learned.

3.25 Annual reports for Full Service Extended Schools (2018/19–2023/24) and Full Service Community Network (2018/19–2022/23) are available online. However, the structure and content vary even between the two Full Service interventions, and reporting remains largely descriptive. The Department advised this is because the two programmes are very different in their design and focus. While we acknowledge the differences within the Full Service provisions, as far as possible, establishing shared outcome measures would support greater comparability, strengthen the evidence base and further inform evaluation.

3.26 Publicly available information on the Education Transformation Programme is limited. The Gateway Review of the programme was issued in December 2018. One of its seven recommendations was to establish a central repository of lessons learned from similar projects and programmes within the Department and other departments, to inform the Transformation Programme. It was deemed essential that this be completed by March 2019. Other recommendations concerned the critical need for a robust communication plan and the critical need to implement a programme planning methodology supported by a suitable tool. However, the programme was suspended in 2020 due to COVID-19 and formally closed in 2021 because of funding uncertainties, changes arising from COVID-19 and potential duplication arising from the Independent Review of Education. An internal closure report for the programme was submitted to the Department’s Audit and Risk Assurance Committee in March 2022. The report is detailed, but it is not apparent what the outcomes or achievements of the projects were.

3.27 Information on SEND Transformation is available on the Education Authority’s website, including video updates for parents and carers. While these updates support stakeholder engagement, they do not communicate evaluative findings. No formal evaluation reports are publicly accessible. It is important that evaluative findings are communicated once the Local Impact Team (LIT) model has been fully embedded. The Department informed us that the Education and Training Inspectorate has been commissioned to evaluate the extent to which the LITs were prepared to go live in September 2025 and the future development of LITs.

3.28 In February 2025, the Minister brought forward a SEN Reform Agenda with a five-year delivery plan and an outcomes framework for 2025 to 2030. This document outlines the four outcomes to be achieved over the next five years. It also lists the population indicators which will be used to determine whether, and to what extent, progress is being made towards these outcomes.

Recommendation 6

Publishing evaluation outputs consistently, linking data to outcomes, and sharing lessons learned with stakeholders would strengthen governance and support evidence-based decision-making.

The Department should ensure that it has appropriate mechanisms in place to provide internal decision-makers with evidence about the effectiveness of programmes on an ongoing basis. Where evaluation identifies good practice, the Department should ensure its processes allow this to be shared within the education sector and, if appropriate, with other government departments.

Meaningful annual performance reports should be published to provide the taxpayer with detailed information on the cost, performance and impact of individual interventions and programmes delivered by the Department.